A Brief Introduction to Artificial Intelligence

3 March 2020 by Johautt Hernández

Two decades ago, many of today's technologies would have seemed like pure fiction. Watching James Bond remotely call and drive his BMW 750iL using a pocket device — while the car talked back — we wondered: when would something like that actually be possible? What would the electronics and software need to look like?

Today's smartphones already outperform the most powerful desktop computers of that era. Combine one with adequate computing hardware, electromechanical actuators, and integrated software, and the vehicle from that film isn't far off. That's just one example of how dramatically the technological landscape has shifted.

One of the fields that has changed most profoundly is artificial intelligence.

Capabilities like computer vision, speech-to-text conversion, voice synthesis, superhuman game-playing, image generation from sparse data, and pattern detection for diagnosing physical and mental conditions are all realities today — and they are advancing faster than ever.

This post covers the key historical milestones in AI's development. A follow-up will break down the core concepts needed to understand how these systems actually work.

Milestones in Artificial Intelligence

The story begins in the mid-1930s, when Alan Turing conceptualized a device capable of simulating any computational algorithm — what we now call the Turing machine.

In 1950, Turing proposed an experiment to test whether a machine could imitate human behavior: a judge, a human, and a computer are placed in separate rooms, and the judge must determine which of the other two is human, using only written questions. This became known as the Turing test.

Between those two milestones, in 1942, science fiction writer Isaac Asimov formulated his Three Laws of Robotics, establishing behavioral constraints designed to prevent autonomous machines from harming human beings.

By 1956, John McCarthy had coined the term artificial intelligence to describe the goal of building intelligent machines and algorithms. Optimism ran high — perhaps too high. Some researchers predicted that AI would be commonplace by the 1970s, a forecast that proved premature.

Early Neural Networks

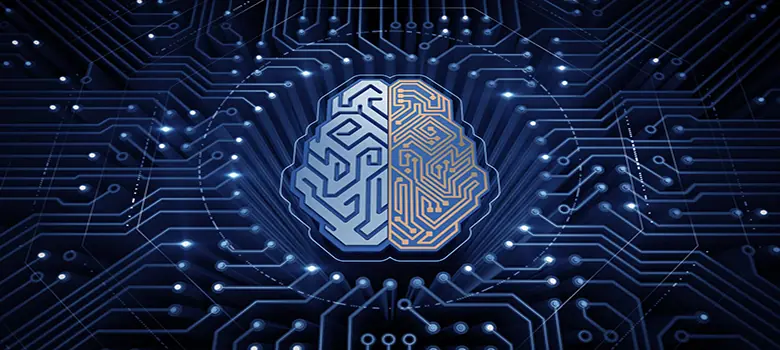

In 1957, Frank Rosenblatt developed the first simple neural network algorithm: the perceptron. His work built on research by Santiago Ramón y Cajal and Charles Scott Sherrington into how the human brain and its biological networks of neurons function.

Rosenblatt also drew on the 1943 model proposed by McCulloch and Pitts, which introduced the first modern neural network unit as a minimal processing element. By the late 1950s, a machine had been built that could learn to classify images of men and women — a genuine milestone at the time.

An illustration of the first concepts in artificial neural networks.
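
The perceptron idea described above can be sketched in a few lines of Python. This is a minimal illustration and not Rosenblatt's original implementation: the function names, the learning rate, and the toy dataset (the logical AND function) are all assumptions chosen for brevity. The unit computes a weighted sum of its inputs, fires if the sum is positive, and nudges its weights whenever it makes a mistake.

```python
def predict(weights, bias, x):
    """Fire (return 1) if the weighted sum of the inputs is positive, else 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, labels, epochs=20, lr=1.0):
    """Classic perceptron rule: shift the weights toward every misclassified sample."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in zip(samples, labels):
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Toy data (an assumption for illustration): the logical AND of two inputs.
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 0, 0, 1]

weights, bias = train_perceptron(samples, labels)
predictions = [predict(weights, bias, x) for x in samples]
print(predictions)  # [0, 0, 0, 1]
```

A single unit like this can only separate classes with a straight line, which is why the toy example uses AND and not a function such as XOR.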


In 1966, Joseph Weizenbaum created ELIZA, widely regarded as the world's first chatbot. It incorporated natural language processing, responded using scripted phrases, and was designed to explore how computers might communicate with humans.
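
To give a feel for what "responding using scripted phrases" means, here is a small sketch of a pattern-matching responder in Python. The rules and the fallback reply are hypothetical and written only for this post; they are not taken from ELIZA's actual script, which was considerably richer.

```python
import re

# Hypothetical rules for illustration only; not ELIZA's real script.
RULES = [
    (re.compile(r"\bI need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bI am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\b(mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."


def respond(message):
    """Return the scripted reply for the first rule that matches the message."""
    text = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Echo the captured fragment back inside the canned phrase.
            return template.format(match.group(1))
    return DEFAULT_REPLY


print(respond("I am sad."))  # How long have you been sad?
print(respond("Hello"))      # Please go on.
```

Nothing here understands the conversation. The program only reflects fragments of the user's own words back, which is roughly why such a simple design could still feel surprisingly human.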

In 1979, the Stanford Cart, one of the earliest autonomous vehicles in history, successfully navigated a space filled with obstacles on its own.

The Cycles of Progress and Setback

In the early 1980s, history repeated a familiar pattern. Japan's ambitious Fifth Generation Computer project fueled a surge of interest in expert systems, but the initiative fell short of most of its goals. The field entered another slowdown that lasted into the 1990s, a period researchers sometimes call an "AI winter."

The turning point came in 1986, when Rumelhart, Hinton, and Williams published their landmark paper popularizing backpropagation: a method for computing the gradient of a cost function with respect to a network's weights far more efficiently than brute-force approaches. This was a pivotal moment. Much of the neural network training we rely on today traces directly back to that research.
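
The idea can be illustrated on the smallest possible network. The sketch below is an assumption-laden toy, not the formulation from the 1986 paper: it uses one input, one hidden tanh unit, one linear output, and a squared-error cost, with made-up weight values. Backpropagation applies the chain rule backward through the network to get every gradient from a single forward pass, while the brute-force alternative re-evaluates the cost separately for each weight.

```python
import math


def forward(w1, w2, x):
    """Tiny network: input -> tanh hidden unit -> linear output."""
    h = math.tanh(w1 * x)
    y = w2 * h
    return h, y


def cost(w1, w2, x, target):
    """Squared-error cost for a single training example."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - target) ** 2


def backprop_gradients(w1, w2, x, target):
    """Chain rule applied from the output back toward the input."""
    h, y = forward(w1, w2, x)
    d_y = y - target                    # dC/dy
    grad_w2 = d_y * h                   # dC/dw2
    d_h = d_y * w2                      # dC/dh
    grad_w1 = d_h * (1.0 - h * h) * x   # dC/dw1, using tanh'(z) = 1 - tanh(z)^2
    return grad_w1, grad_w2


def numerical_gradients(w1, w2, x, target, eps=1e-6):
    """Brute force: perturb each weight and re-evaluate the cost (central differences)."""
    g1 = (cost(w1 + eps, w2, x, target) - cost(w1 - eps, w2, x, target)) / (2 * eps)
    g2 = (cost(w1, w2 + eps, x, target) - cost(w1, w2 - eps, x, target)) / (2 * eps)
    return g1, g2


# Illustrative values, chosen arbitrarily.
w1, w2, x, target = 0.5, -1.2, 2.0, 0.3
analytic = backprop_gradients(w1, w2, x, target)
numeric = numerical_gradients(w1, w2, x, target)
print(analytic)
print(numeric)
```

Both approaches should agree to several decimal places. The difference is cost: the brute-force version needs extra forward passes for every weight, which becomes impractical for networks with millions of weights, whereas backpropagation reuses intermediate results.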

In 1997, IBM's Deep Blue defeated world chess champion Garry Kasparov — demonstrating, for the first time to a broad audience, that a computer system could beat the best human player in the world. It fundamentally shifted how the technology industry and the general public thought about AI's potential.

The Deep Learning Era

In 2012, Google scientists built a neural network with one billion connections, spread across 16,000 processor cores, and let it explore images taken from YouTube videos using deep learning algorithms. Without being told what a cat was, the network began identifying cats in images on its own, and it independently learned to recognize human faces and bodies as well.

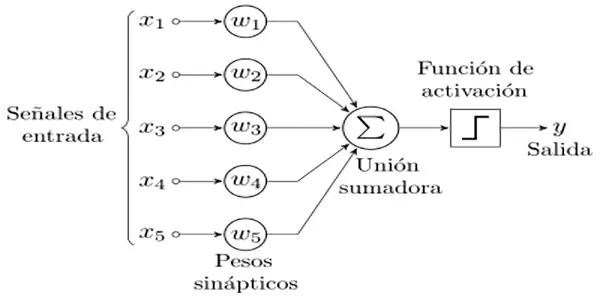

In 2014, a conversational bot named Eugene Goostman, modeled as a 13-year-old Ukrainian boy, convinced roughly a third of the judges during a Turing test that it was human.

Some of the most significant milestones in the history of artificial intelligence.


In 2016, Microsoft launched a Twitter chatbot named Tay, designed to learn through interactions with users. Within hours, coordinated abuse by trolls had pushed Tay into posting racist and inappropriate content — a stark demonstration of the risks of unconstrained learning from public input.

Also in 2016, Google DeepMind's AlphaGo defeated Lee Sedol, one of the world's top players, at Go: an ancient Chinese strategy game whose complexity had long made it seem a uniquely human domain. AlphaGo combined machine learning techniques with tree-search algorithms, trained on both human and computer gameplay.

The following year brought an even more remarkable result: AlphaGo Zero, trained for just 40 days and given no prior human gameplay data, defeated every previous version of AlphaGo — each of which had already beaten the world's best human players.

That covers the major turning points in AI's history. It's a vast subject, and we'll continue unpacking it in the next installment.

Johautt Hernández jhernandez@innotica.net